Amazon now expects around $75 billion in capital spending in 2024, with the majority on technology infrastructure. On the company’s latest earnings call, chief executive Andy Jassy said he expects the company will spend even more in 2025.

This represents a surge on 2023, when it spent $48.4 billion for the whole year. The biggest cloud providers, including Microsoft and Google, are all engaged in an AI spending spree that shows little sign of abating.

Amazon, Microsoft, and Meta are all big customers of Nvidia, but are also designing their own data center chips to lay the foundations for what they hope will be a wave of AI growth.

“Every one of the big cloud providers is feverishly moving towards a more verticalized and, if possible, homogenized and integrated [chip technology] stack,” said Daniel Newman at The Futurum Group.

“Everybody from OpenAI to Apple is looking to build their own chips,” noted Newman, as they seek “lower production cost, higher margins, greater availability, and more control.”

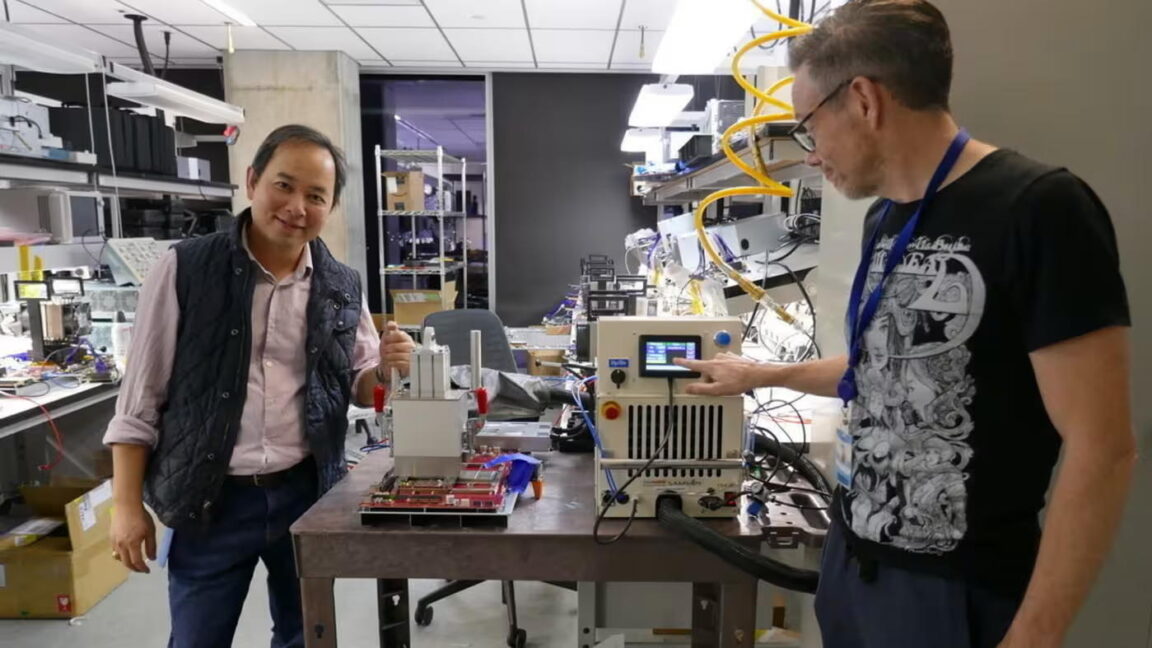

“It’s not [just] about the chip, it’s about the full system,” said Rami Sinno, Annapurna’s director of engineering and a veteran of Intel and SoftBank’s Arm.

For Amazon’s AI infrastructure, that means building everything from the ground up, from the silicon wafer to the server racks the chips fit into, all of it underpinned by Amazon’s proprietary software and architecture. “It’s really hard to do what we do at scale. Not too many companies can,” said Sinno.

After starting out building a security chip for AWS called Nitro, Annapurna has since developed several generations of Graviton, its Arm-based central processing units that offer a low-power alternative to the traditional server workhorses from Intel or AMD.